Human-Centered AI Model Evaluation & Conversation Design for a Global Technology Leader

Client Background

A global technology leader building AI-powered products for millions of users engaged Akraya to bring human-centered conversation design expertise to its model evaluation process.

Challenges Faced

AI Models Lacked Human Nuance in Conversation

Automated metrics and classification systems could flag potential issues at scale but missed subtle cues like user frustration, confusion, or delight.

No Standardized Conversation Design Metrics

Teams lacked a unified framework to measure how well AI models handled dialogue from greeting to resolution.

Fragmented Ownership Across Disciplines

Issues uncovered during evaluation often spanned policy, UX, engineering, and content strategy, yet no single role had end-to-end visibility.

Akraya’s Strategic Solution

We orchestrated a solution that embeds human judgment and design thinking into AI model evaluation:

Human-Led Transcript Review Engine

Established systematic review of AI conversation transcripts across multiple models, applying conversation design principles to identify gaps in naturalness, empathy, and task completion.

Custom Metrics & Evaluation Framework

Designed and implemented a structured set of conversation quality metrics tailored to each AI model’s use case.

Cross-Functional Issue Escalation & Resolution

Acted as a bridge between policy, UX, engineering, and content strategy teams, ensuring that identified issues, whether design flaws, policy misalignments, or technical bugs, were routed to the right owners.
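As an illustration only, the review, metrics, and routing steps described above could be sketched as follows. This is a minimal sketch under stated assumptions, not the framework Akraya delivered: the `Review` record, the 1-to-5 rating scale, the `OWNERS` mapping, and the function names are all hypothetical. The three scoring dimensions and the three issue types are the ones named in this section.

```python
from dataclasses import dataclass, field
from statistics import mean

# Dimensions named in the transcript review description above.
DIMENSIONS = ("naturalness", "empathy", "task_completion")

# Hypothetical mapping from issue type to owning team (an assumption).
OWNERS = {
    "design_flaw": "UX",
    "policy_misalignment": "Policy",
    "technical_bug": "Engineering",
}


@dataclass
class Review:
    """One human review of one conversation transcript (hypothetical schema)."""
    transcript_id: str
    model_version: str
    scores: dict                      # dimension -> reviewer rating, assumed 1..5
    issues: list = field(default_factory=list)  # issue types found in the transcript


def summarize(reviews):
    """Average each dimension per model version so versions can be compared."""
    by_version = {}
    for r in reviews:
        by_version.setdefault(r.model_version, []).append(r)
    return {
        version: {d: mean(r.scores[d] for r in group) for d in DIMENSIONS}
        for version, group in by_version.items()
    }


def route(reviews):
    """Group transcript IDs by the team that owns each issue type."""
    queues = {}
    for r in reviews:
        for issue in r.issues:
            owner = OWNERS.get(issue, "Unassigned")
            queues.setdefault(owner, []).append(r.transcript_id)
    return queues


if __name__ == "__main__":
    reviews = [
        Review("t1", "v1", {"naturalness": 4, "empathy": 2, "task_completion": 5},
               ["design_flaw"]),
        Review("t2", "v1", {"naturalness": 2, "empathy": 4, "task_completion": 3},
               ["technical_bug", "policy_misalignment"]),
    ]
    print(summarize(reviews))
    print(route(reviews))
```

Keeping the scores keyed by model version is what makes version-over-version tracking possible, and keeping routing as a simple lookup makes ownership explicit for every issue type.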

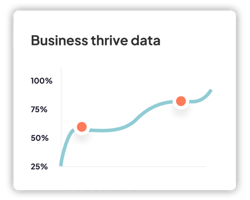

Measurable Outcomes

Operational

Created a standardized evaluation framework that enables consistent tracking of AI model performance across multiple versions.

Financial

$23.6M in annualized efficiency value from reducing time spent on manual, uncoordinated debugging.

Business

Enhanced the user experience across all AI products, improving overall user satisfaction and trust.

Conclusion

Akraya embedded conversation design expertise into AI model evaluation, bridging the gap between machine-scale analysis and human-centric quality. By defining measurable metrics, conducting systematic transcript reviews, and facilitating cross-functional resolution, we enabled the organization to launch more natural, trustworthy AI experiences.